Organic Visibility Is the New Bid Floor

What actually shipped in OpenAI's May 5 launch, and why the brands who skipped AEO are about to discover the bill in the ad auction.

OpenAI launched self-serve ads last Monday.

The press covered the news…almost no one covered what actually shipped.

Folks have been asking me variations of the same question this month: should we be paying for ChatGPT placements yet? I keep giving different answers, because the variable that matters isn’t whether the ad product works. It’s how visible the brand already is organically.

What OpenAI rolled out on May 5 was the full infrastructure for commerce inside ChatGPT, built quietly over the last 12 months and now ready to run…and it turned organic visibility into the most important driver of paid economics on the most important new channel in B2B.

If you only look at the ad product, you miss what’s happening to the rest of the surface.

The launch in one list

Here’s what went live on May 5:

→ Self-serve Ads Manager (beta, US)

→ CPC bidding, $3-5 max bid recommended

→ Pixel-based measurement and Conversions API

→ Programmatic Advertiser API

→ Agency partners: Dentsu, Omnicom, Publicis, WPP

→ Tech partners: Adobe, Criteo, Kargo, Pacvue, StackAdapt

→ $50K minimum eliminated

→ Live in US, Canada, AU, NZ; expanding to UK, Mexico, Japan, Brazil, South Korea

→ Run by Asad Awan (monetization) and Dave Dugan (ex-Meta, ad sales)

This is the core Google and Meta ad infrastructure rebuilt for a conversational surface in 90 days. Three months between the Feb 9 pilot launch and the May 5 self-serve opening.

That speed only happens when the underlying systems have already matured. Shout-out to Alex Halliday and the AirOps team for shipping same-day support for ChatGPT Ads measurement and attribution. AEO platforms don’t move at that pace unless they believe this is now infrastructure rather than an experiment.

The leadership matters too. Asad Awan owns monetization. Dave Dugan, ex-Meta, owns ad sales. Above them, Fidji Simo runs OpenAI’s Applications business. She’s the executive Sam Altman hired specifically to turn ChatGPT into a commercial product. None of these people were brought in to test whether ads work. They were brought in to scale a business.

OpenAI is targeting $2.5B in ad revenue for 2026 and $100B by 2030. They crossed $100M annualized in the first six weeks of the pilot. Less than 20% of eligible users are currently seeing ads, which means inventory is about to expand massively as the auction matures and the self-serve flywheel spins up.

This is not a side experiment. Ads are now the third revenue line at OpenAI, alongside subscriptions and enterprise. It’s the newest one, and it has the steepest growth curve of the three.

What the data is actually saying

Most of the coverage of ChatGPT ads has been built around the wrong numbers.

The story that dominated last month was Adthena’s report that one of their clients saw a 0.91% click-through rate on ChatGPT ads, roughly one-seventh of the 6.4% benchmark on Google Search. That number got cited in every “ChatGPT ads aren’t working” piece for three weeks straight.

Then Similarweb published their benchmark last week. The aggregate CTR for ChatGPT ads is 0.68%. Top quartile is 1.0%. Best brands are hitting 1.57%. Peak is 5.4%.

For context: display ads sit at 0.35%. Podcast ads run 0.5-1%. LinkedIn Ads, which premium B2B advertisers happily pay $40+ CPMs for, average around 0.5-2% depending on objective.

ChatGPT is already outperforming display in CTR. Before the auction has any real maturity. Before the relevance model has been trained on billions of impressions. Before any best-practice creative or targeting frameworks exist.

To be fair, the display comparison isn’t perfectly clean. Display runs cold across the open web, often with no context match. ChatGPT ads run inside high-intent conversations where the user is already in research mode. The numbers aren’t apples to apples. But even allowing for the context gap, ChatGPT is clearing a benchmark most people assumed it would fail at this stage.

The “users are in task-completion mode so CTRs will stay low” thesis isn’t wrong about the average. It’s wrong at the top end of the distribution. Brands that get the placement right are already hitting numbers most assumed would take years.

Conversion data matters more than CTR for performance marketers. Criteo reported that LLM-referred users convert at roughly 1.5x other referral channels. Conversion rates on ChatGPT ads run between 1% and 4% on average. It’s early, the measurement is improving but still aggregated, and the ceiling is much higher than the headline criticism suggests. Brands going early are getting cheap impressions in front of users with deeper intent than any other surface.

That’s the paid story. The organic story is more interesting.

The shift no one is naming

AirOps published research last week that’s the most important AEO finding of 2026 so far. Over a 16-week window from December through March, they tracked 170 million AI answers across roughly 3,000 brands. They captured every citation URL, every brand mention, every source.

Between mid-January and early March, average citations per answer on brand queries dropped 41%, from 4.95 to 2.96. By late March, citation counts had largely recovered, back to about 90% of the December baseline.

If you stopped there, you’d call it a temporary disruption from a model update and move on. That’s what most teams did.

But citation counts don’t tell the whole story. The composition changed during the dip, and it has stayed changed.

→ Product domains went from 55% of brand-query citations to 63% at the trough, and have held around 62%

→ Educational content dropped from 14% to under 10%

→ Review platforms (G2, Capterra, TrustRadius) climbed from 5% to about 7%

The model is going direct to source. When someone asks about a product, ChatGPT is more likely to cite that product’s own pages (pricing, comparison, documentation) than third-party content about it.

That alone is a meaningful shift for content teams who spent the last decade investing in educational top-of-funnel.

But the AirOps data showed something even more important:

Brand mentions per answer went up. Citation links went down.

The model is talking about brands more frequently in its answers while attaching fewer citation links to those mentions. Awareness is rising. Click-through is compressing.

If you only measure share of citation, you’ll miss the awareness gain. If you only measure brand mention count, you’ll miss the click collapse. The two signals are moving in opposite directions on the same surface.

That’s not a model-update artifact. That’s the new layer.

Profound’s data confirms it from another angle. Wikipedia alone accounts for 7.8% of all ChatGPT citations. The top three domains (Wikipedia, Reddit, TechRadar) control 22% of all citation share. ChatGPT referral traffic to brand sites has dropped 52% since July 2024. Reddit citations have grown 87% in the same window.

The citation pool is consolidating at the top. The click-through layer is thinning underneath. And the brands that win citations are increasingly the ones with structural decision-stage authority, not the ones with the biggest blog libraries.

What I saw firsthand

I lived a smaller version of this shift inside the Webflow growth team.

When AI chatbot referrals first started showing up in our analytics, the volume was tiny. A rounding error. The kind of channel you’d flag in a monthly review and move on from.

Two things changed quickly. First, the volume grew faster than any other channel we were tracking. AI referrals went from a rounding error to a real percentage of signups in months, not years. Last public number from Webflow: about 10% of total signups now come from AI chatbots. Some peer companies in B2B and developer tools are seeing similar or higher shares.

Second, and this is the part that mattered more, the conversion behavior of those users was different. They arrived deeper in the funnel than any other channel. They’d already compared us to alternatives inside the chat. They’d already gotten an opinion from the model about whether we were the right fit. The website was confirmation, not consideration.

That changed what content needed to do. Top-of-funnel education stopped earning its keep, because the model was synthesizing that content itself before the user ever clicked through. Comparison pages, product pages, pricing pages, the docs, the integration index, every decision-stage surface started doing more work than it ever had.

What I’m watching now is the same dynamic playing out faster, on a bigger surface, with paid mechanics on top. The brands that built decision-stage authority over the last 18 months are about to find out the auction was designed around them. The ones who didn’t are about to find out it wasn’t.

The window that explains the timing

Halliday published a stat on LinkedIn this week that almost no one has connected to the broader picture.

Between April 7 and 14, AirOps tracked ChatGPT serving roughly 2,813 sponsored cards per 100,000 searches. About 3% of queries returning a sponsored card.

After April 15: 47 per 100,000.

A 98% collapse, overnight. Held flat for three weeks since.
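For anyone who wants to check the arithmetic, here is a quick sketch in Python. It uses only the two AirOps figures quoted above; the variable names are mine.

```python
# Sponsored cards per 100,000 searches, as quoted above from AirOps tracking.
PER = 100_000
before = 2_813   # April 7-14
after = 47       # after April 15

share_before = before / PER           # fraction of queries returning a sponsored card
share_after = after / PER
decline = (before - after) / before   # relative drop in sponsored-card frequency

print(f"before:  {share_before:.2%} of queries")   # 2.81%
print(f"after:   {share_after:.3%} of queries")    # 0.047%
print(f"decline: {decline:.1%}")                   # 98.3%
```

So the "about 3%" and "98%" figures are both roundings of the same two counts.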

Halliday’s read was measured: OpenAI was testing behavior at scale, then pulled back to study the data before shifting to production monetization. That’s defensible.

I read it differently.

OpenAI didn’t lose its nerve on April 15. OpenAI calibrated the trust budget before opening the auction to self-serve.

To understand why, you have to understand the structural problem OpenAI is solving. They have two competing pressures inside the same interface.

On one side, an organic answer experience that has to remain trustworthy. ChatGPT works because people believe the answer isn’t bought. The moment that trust erodes, the entire product collapses. No amount of ad revenue replaces a broken trust contract.

On the other side, a monetization layer that has to generate enough revenue to fund the free tier, the compute, the model training, and the IPO narrative. OpenAI burned $8B in cash in 2025. Ads are the only realistic way to keep the free tier alive at 900M+ weekly users.

These two pressures share one finite resource: user trust.

Every decision OpenAI has made about the ads product is consistent with managing trust as a budget across both sides. The structural separation between ad-serving systems and the answer model. The clear “Sponsored” labeling. The age gates. The category restrictions on sensitive topics like health and politics. The privacy posture. The deliberate throttle on sponsored card frequency.

That April 15 collapse was OpenAI saying: we cannot let the auction run at 3% of queries while we open it up to thousands of new advertisers. The relevance bar has to tighten, the inventory has to be controlled, the trust hit has to be measured before we let volume scale.

Which means when self-serve opened on May 5, the auction was already pre-tuned for trust preservation, not impression maximization.

The framework

Ads are the wrong frame for what’s happening.

What’s happening is four ranking decisions converging into one system:

Retrieval: which content gets pulled into the answer

Citation: which content gets linked in the response

Action: which Apps get surfaced for the user to take next steps

Monetization: which ads appear alongside

OpenAI has shipped the infrastructure for all four in the last 18 months. The Apps SDK. The shopping research feature. The Responses API. The Conversions API. Self-serve ads.

These are not separate launches. They are one converging system with one finite input: user trust.

That makes the auction inside ChatGPT structurally different from Google’s or Meta’s.

Google’s auction prices keyword intent. Meta’s auction prices audience attention. ChatGPT’s auction has to price relevance inside a trust-constrained answer surface, where any contamination of the organic experience destroys the value of the paid one.

In a trust-constrained system, paid pricing follows organic authority. Brands the model already trusts to mention will clear the relevance bar cheaply. Brands it doesn’t trust can pay, but get gated by a quality system designed to protect the answer surface from low-relevance placement.

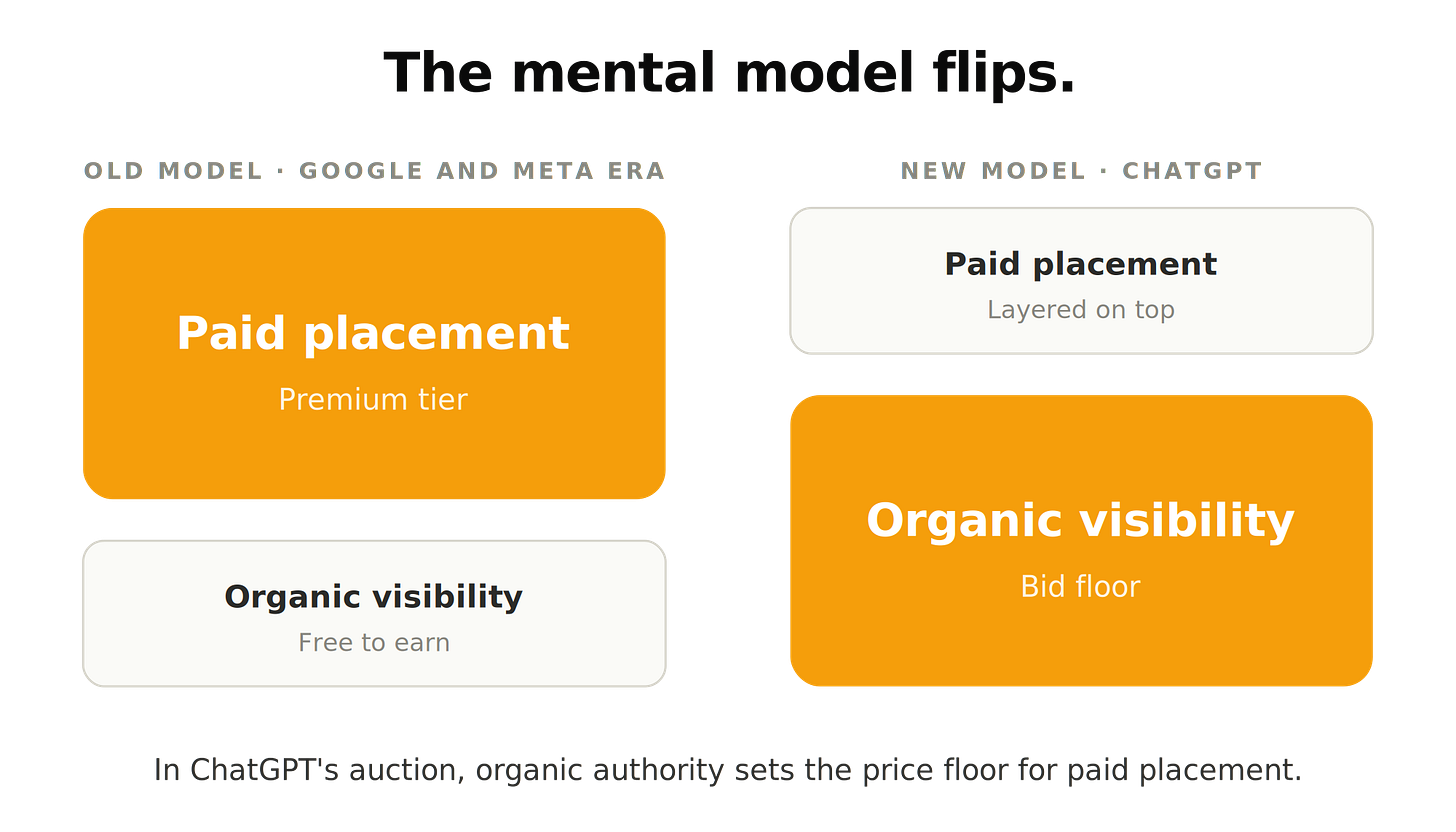

The mental model flips

For twenty years, the mental model for marketers has been:

→ Organic visibility is free. You earn it through content, links, and trust signals.

→ Paid placement is the premium. You buy it when you can’t earn it organically.

Inside ChatGPT, that model inverts.

→ Organic visibility is the BID FLOOR. The price you pay to enter the auction at all.

→ Paid placement is the premium on top of that floor. The less organic authority you have, the more you pay per click.

This is the part nobody has named yet. It’s the exact dynamic Google’s Quality Score has produced in paid search for a decade. Brands with strong relevance signals pay 3-5x less per click than brands with weak ones. Same auction, different effective CPCs based on the underlying quality bar.

ChatGPT’s auction is a Quality Score system on a conversational surface, gated by an even stricter trust function because the placement sits adjacent to a trusted answer.
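To make the Quality Score comparison concrete, here is a minimal sketch of the simplified textbook model of a quality-weighted auction: ads are ranked by bid times quality, and the winner pays just enough to beat the next ad's rank. This is an illustration, not OpenAI's auction, whose mechanics have not been published. The function name, the quality scores, and the rival's numbers are all hypothetical.

```python
def cpc_to_win(rival_bid, rival_quality, own_quality, increment=0.01):
    """Lowest CPC that outranks a rival when rank = bid * quality.

    Simplified quality-weighted second-price rule; every input here is
    a hypothetical value chosen for illustration.
    """
    rival_rank = rival_bid * rival_quality
    return round(rival_rank / own_quality + increment, 2)


# One rival in the conversation: $4.00 bid, mid-range quality score.
rival_bid, rival_quality = 4.00, 5.0

strong_brand = cpc_to_win(rival_bid, rival_quality, own_quality=9.0)
weak_brand = cpc_to_win(rival_bid, rival_quality, own_quality=3.0)

print(f"strong organic authority pays ${strong_brand:.2f} per click")  # $2.23
print(f"weak organic authority pays   ${weak_brand:.2f} per click")    # $6.68
print(f"premium for the weak brand: {weak_brand / strong_brand:.1f}x")  # 3.0x
```

Under these made-up inputs the weaker brand pays about three times as much for the same slot, and its required bid lands above the $3-5 recommended range. If ChatGPT's relevance gate works anything like this, low organic authority shows up directly as a higher effective CPC.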

If you spent the last 18 months investing in Answer Engine Optimization, earning citation share on your category queries, building decision-stage authority, structuring content for retrieval, you’ve been building the foundation that determines your paid economics on this channel.

If you skipped AEO and assumed you’d buy your way into ChatGPT placements when the time came: the bill is coming due in the ad auction.

What to do before the wave hits

Three moves matter right now.

First, measure your Answer Capture Rate. Not just whether your brand gets mentioned. Whether it gets cited. Whether it gets recommended. Whether it gets surfaced as the answer to the highest-intent prompts in your category. Most teams are measuring share of mention. The right metric is share of decision.

Second, audit your decision-stage authority across the surfaces that feed the model. Product pages. Comparison pages. G2, Capterra, TrustRadius profiles. Reddit threads where your category gets discussed. The pages cited in AI Mode and ChatGPT Search for “best [your category]” prompts. If you’re not in those, you’re outside the pool the auction is going to price from.

Third, plan for the inversion. The paid budget you set aside for ChatGPT ads should be a function of your organic visibility, not a substitute for it. Brands with thin AEO foundations should expect to pay 2-3x more for the same placement than brands with strong ones. Build the foundation or factor the premium into your forecasts.

This is what Answer Ownership was built for. Answer Capture Rate is how you measure it.
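One way to start tracking this is sketched below in Python. I have not given a formula for Answer Capture Rate above, so treat the definition here as an assumption: for a set of high-intent prompts, it reports the share of answers where the brand is mentioned, cited, and recommended. The class, field names, and sample prompts are hypothetical, and the observations would come from whatever answer-tracking tool you already use.

```python
from dataclasses import dataclass


@dataclass
class AnswerObservation:
    prompt: str
    mentioned: bool     # brand named in the answer text
    cited: bool         # brand-owned URL linked as a source
    recommended: bool   # brand presented as the suggested choice


def capture_rates(observations):
    """Share of tracked answers at each level: mention, citation, recommendation."""
    n = len(observations)
    if n == 0:
        return {"mention": 0.0, "citation": 0.0, "recommendation": 0.0}
    return {
        "mention": sum(o.mentioned for o in observations) / n,
        "citation": sum(o.cited for o in observations) / n,
        "recommendation": sum(o.recommended for o in observations) / n,
    }


# Hypothetical sample: four high-intent prompts in one category.
sample = [
    AnswerObservation("best cms for b2b sites", True, True, True),
    AnswerObservation("cms pricing comparison", True, False, False),
    AnswerObservation("headless cms alternatives", True, True, False),
    AnswerObservation("cms for marketing teams", False, False, False),
]

rates = capture_rates(sample)
for level, rate in rates.items():
    print(f"{level}: {rate:.0%}")
```

In this made-up sample the brand is mentioned in 75% of answers but recommended in only 25%. That gap is the difference between share of mention and share of decision.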

Search monetized attention.

AI monetizes trusted resolution.

In 12 months, the brands with high organic citation share will be paying 2-3x less for placement in the same conversations. The ones who skipped AEO are about to learn that the auction has been priced against them for the last 12 months. They just haven’t seen the invoice yet.

That’s the call I’m staking. Disagree if you see it differently. I’m going deep on this over the next few weeks.
